Implications of Advances in Machine Translation

By Cathy Deng | April 2, 2021

On March 16, graduate student Han Gao wrote a two-star review of a new Chinese translation of the Uruguayan novel La tregua. Posted on the popular Chinese website Douban, her comments were brief, yet biting – she claimed that the translator, Ye Han, was unfit for the task, and that the final product showed “obvious signs of machine translation.” Eleven days later, Gao apologized and retracted her review. This development went viral because the apology had not exactly been voluntary – friends of the affronted translator had considered the review to be libel and reported it to Gao’s university, where officials counseled her into apologizing to avoid risking her own career prospects as a future translator.

Gao’s privacy was hotly discussed: netizens felt that though she’d posted under her real name, Gao should have been free to express her opinion without offended parties tracking down an organization with power over her offline identity. The translator and her friends had already voiced their disagreement and hurt; open discussion alone should have been sufficient, especially when no harm occurred beyond a level of emotional distress that is ostensibly par for the course for anyone who exposes their work to criticism by publishing it.

Another opinion, however, was that spreading misinformation should carry consequences, because by the time the defamed party can respond, the damage is often already done. Hence the next question: was Gao’s post libelous? Quality may be a matter of opinion, but whether a translator leaned on machine translation is a matter of integrity. To this end, another Douban user extracted snippets from the original novel and compared Han’s 2020 translation to a 1990 rendition by another translator, as well as to the corresponding outputs from DeepL, a website providing free neural machine translation. The analysis led to two main observations: that Han’s work was often similar in syntax and diction to the machine translation, more so than its predecessor was; and that, in the eyes of observers, the machine translation was in some cases superior to its human competition. The former may seem incriminating, but the latter points the other way: after all, if Han had seen the automated translation, wouldn’t she have made it better, not worse? Perhaps the similarities were caused merely by a lack of training (Han was not formally educated in literary translation).

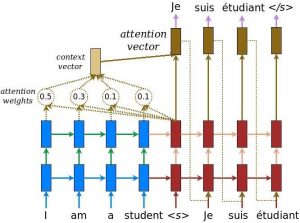

Researchers have developed methods to detect machine translations, such as assessing the similarity between the text in question and its back-translation (e.g., translated from Chinese to Spanish, then back to Chinese). But is this a meaningful task for the field of literary translation? Machine learning has evolved such that models can generate or translate text that is nearly indistinguishable from, or sometimes even more enjoyable than, the “real thing.” The argument that customers always “deserve” fully manual work is outdated. And compared with the detection of deepfakes, detecting machine translations does little to combat misinformation.
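To make the back-translation idea concrete, here is a minimal sketch in Python. It is not the method of any particular paper. The working assumption, as I understand the approach, is that machine-produced text tends to change less on a round trip than human-written text. The function `round_trip_similarity`, the `Translator` type, and the two toy translators are all hypothetical names introduced for illustration; a real check would plug in an actual translation system in place of the toys.

```python
from difflib import SequenceMatcher
from typing import Callable

# A translator is any function mapping text to text. Real use would wrap an
# MT system; which one, and its API, is deliberately left open here.
Translator = Callable[[str], str]


def round_trip_similarity(
    text: str, to_pivot: Translator, from_pivot: Translator
) -> float:
    """Return a 0..1 similarity between `text` and its back-translation."""
    back_translated = from_pivot(to_pivot(text))
    # Character-level matching avoids needing a word segmenter for Chinese.
    matcher = SequenceMatcher(None, text, back_translated, autojunk=False)
    return matcher.ratio()


# Hypothetical stand-ins for "Chinese -> Spanish" and "Spanish -> Chinese",
# written as toy string edits so the sketch runs without any MT service.
def toy_to_pivot(s: str) -> str:
    return s.upper()


def toy_from_pivot(s: str) -> str:
    return s.lower().replace("truce", "respite")


score = round_trip_similarity("the truce held", toy_to_pivot, toy_from_pivot)
print(f"round-trip similarity: {score:.2f}")
```

A score near 1 means the text barely changed on its way out and back. Where to draw the line would have to be calibrated on texts of known origin, and even then the score is a piece of evidence rather than proof.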

Yet I believe assessing similarity to machine translation remains a worthwhile pursuit. It may never be appropriate as a measure of professional integrity, because the days when one could ascertain whether a translator relied on automated methods are likely behind us. Just as plagiarism detection tools are disproportionately harsh on international students, a machine translation detection tool (currently only 75% accurate at best) may unfairly punish certain styles or decisions. Yet a low level of similarity may well be a fine indicator of quality if combined with other methods. If even professional literary translators flock to a finite number of ever-advancing machine translation platforms, it is the labor-intensive act of delivering something different that reveals the talent and hard work of the translator. Historically, some of the best translators worked in pairs, with one providing a more literal interpretation that the other then enriched with artistic flair; perhaps algorithms could now play the former role, but the ability to produce meaningful literature in the latter may be the mark of a translator who has earned their pay. After all, a machine can be optimized for accuracy or popularity or controversy, but only a person can rejigger its outputs to reach instead for truth and beauty – the aspects about which Gao expressed disappointment in her review.

A final note on quality: the average number of stars on Douban, as on other review sites, is meant to indicate quality. Yet angry netizens have flooded the works of Han and her friends with one-star reviews, a popular tactic that all but eliminates any relationship between quality and average rating.
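The arithmetic behind that tactic is simple enough to sketch. The ratings below are invented purely for illustration and are not real Douban data; `average_stars` is a hypothetical helper that computes a plain mean, which is only an assumption about how a review site might summarize its stars.

```python
from statistics import mean
from typing import Sequence


def average_stars(ratings: Sequence[int]) -> float:
    """Plain arithmetic mean of star ratings (1 to 5)."""
    return mean(ratings)


# Made-up numbers for illustration only; these are not real Douban data.
organic = [5] * 30 + [4] * 60 + [3] * 10   # 100 readers rating the book
flood = [1] * 300                          # 300 protest one-star reviews

before = average_stars(organic)
after = average_stars(organic + flood)
print(f"before: {before:.1f} stars, after: {after:.1f} stars")
```

With these invented numbers the average falls from 4.2 to 1.8 stars, although nothing about the book has changed. Once protest votes outnumber readers, the score measures the size of the crowd rather than the quality of the work.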

References

- https://www.deepl.com/en/translator

- https://arxiv.org/abs/1910.06558

- https://www.tensorflow.org/tutorials/text/nmt_with_attention

- https://www.douban.com/note/798396792/

- https://163.com/tech/article/G6APE3P700097U82.html

- https://sohu.com/a/458261206_665455/

- http://www.sixthtone.com/news/1007104/how-a-uruguayan-novel-broke-chinas-go-to-review-site