Dangers of Predicting Criminality

By Kritesh Shrestha | March 9, 2022

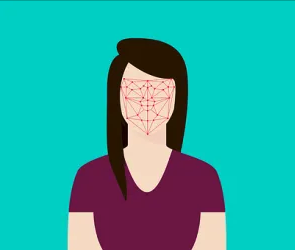

Facial recognition technology has improved substantially over the last five years, and it is now commonly used commercially for biometric identification. According to tests conducted by the National Institute of Standards and Technology, the highest-performing facial identification algorithm as of April 2020 had an error rate of 0.08%, compared to 4.1% for the highest-performing algorithm in 2014. [3] Though these improvements are commendable, concerns arise when these algorithms are applied to high-stakes issues such as criminality.

Tech to Prison Pipeline

On May 5, 2020, Harrisburg University announced that a publication entitled “A Deep Neural Network Model to Predict Criminality Using Image Processing” was being finalized. In this publication, a group of Harrisburg University professors and a Ph.D. student claimed to have developed automated facial recognition software capable of predicting whether someone is likely to become a criminal. [4] The software was said to predict criminality with 80% accuracy and no racial bias, using only a picture of an individual’s face. The data behind the software is biometric and criminal legal data provided by the New York City Police Department (NYPD). While the intent of this software is to help prevent crime, it caught the eye of 2,435 academics, who signed an open letter demanding that the research remain unpublished.

Those who signed the open letter, the Coalition for Critical Technology (CCT), raised concerns over the data used to create the algorithm. The CCT argues that data generated by the criminal justice system cannot be used to classify criminality because the data is unreliable. [5] The dataset contains a history of racially biased and unjust convictions, which would feed that same bias into the algorithm. Another study, _“The ‘Criminality from Face’ Illusion”_, which examines the plausibility of predicting criminality with facial recognition, asserts, “there is no coherent definition on which to base development of such an algorithm. Seemingly promising experimental results in criminality-from-face are easily accounted for by simple dataset bias”. [2] A study conducted by the National Criminal Justice Reference Service concluded that for sexual assault alone, wrongful conviction occurred at a rate of 11.6%. [6] Using unreliable data to classify an individual’s likelihood of committing crimes is harmful, as it would validate unjust practices that have occurred over the years.
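To make the CCT's concern concrete, here is a minimal sketch of how biased labels carry over into a model's output. Everything in it is an assumption made for illustration: the records are synthetic, the groups "A" and "B" are hypothetical, and the "model" is a deliberately trivial per-group frequency estimate rather than the Harrisburg software or any real classifier. Both groups offend at the same true rate, but group "B" includes two wrongful convictions.

```python
from collections import Counter

# Hypothetical, synthetic records: (group, truly_offended, convicted).
# Both groups have the same true offending rate (2 of 10), but group "B"
# carries two wrongful convictions, standing in for biased historical data.
records = (
    [("A", True, True)] * 2
    + [("A", False, False)] * 8
    + [("B", True, True)] * 2
    + [("B", False, True)] * 2
    + [("B", False, False)] * 6
)


def rate_by_group(rows, field_index):
    """Return, per group, the fraction of rows where the chosen field is True."""
    totals, positives = Counter(), Counter()
    for row in rows:
        totals[row[0]] += 1
        positives[row[0]] += int(row[field_index])
    return {group: positives[group] / totals[group] for group in totals}


# Ground truth, which a real dataset would not contain.
true_rate = rate_by_group(records, 1)

# What a model trained only on conviction labels would learn to reproduce.
learned_score = rate_by_group(records, 2)

print("true offending rate:", true_rate)
print("score learned from convictions:", learned_score)
```

In this toy setup the learned score for group "B" is twice that of group "A" even though the underlying behavior is identical, which is the pattern the open letter warns about: the algorithm measures who was convicted, not who offended.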

If an individual were wrongly convicted and awaiting exoneration, their family members or those who look like them might be labeled as “likely” to commit crimes. The study announced by Harrisburg University has since been pulled from publication following public discussion and the CCT’s open letter.

Resurgence of Physiognomy

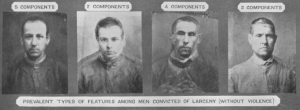

While the use of facial recognition algorithms as a predictor is relatively new, the practice of using outer appearance to predict characteristics, __physiognomy__, dates back to the 18th century. [1] In the past, physiognomy has been used to promote racial bigotry, block immigration, justify slavery, and permit genocide. Although physiognomy has been disproven, the pseudoscience seems to be on the rise with the increased use of facial recognition. The issue with physiognomy lies in the belief that physical features are good indicators of complex human behavior. This simplistic belief is problematic in that it skips several levels of abstraction, ignoring the…role of learning and environmental factors in human development. [2] I don’t believe predicting criminality in a vacuum is harmful, though given the history of physiognomy, predicting criminality seems to be regressive.

Conclusion

The use of facial features as an identifier for criminality is inherently biased, as it means accepting the assumption that individuals with certain facial features are more likely to commit crime. With the knowledge that bias exists within our criminal justice system, it is irresponsible to recommend the use of criminal justice data to predict criminality. The implication of an algorithm being able to predict criminality is frightening, as it could be used to further unjust actions.

Open-Ended Thought Experiment in Predicting Criminality

What would the world look like if an algorithm had reliable data and were 100% accurate at predicting criminality?

– If a child were born into this world with all of the features classified as “likely to commit crime”, should that child be monitored?

– What rights would that child have to their own privacy if the algorithm is certain that the child will be a criminal?

– What does it mean for the future of the child? Should they be denied rights due to this classification?

References

[1] Agüera y Arcas, Blaise, et al. “Physiognomy’s New Clothes.” _Medium_, 20 May 2017, https://medium.com/@blaisea/physiognomys-new-clothes-f2d4b59fdd6a.

[2] Bowyer, Kevin W., et al. “The ‘Criminality from Face’ Illusion.” _IEEE Transactions on Technology and Society_, vol. 1, no. 4, 2020, pp. 175–183, https://doi.org/10.1109/tts.2020.3032321.

[3] Crumpler, William. “How Accurate Are Facial Recognition Systems – and Why Does It Matter?” _Center for Strategic and International Studies_, 16 Feb. 2022, https://www.csis.org/blogs/technology-policy-blog/how-accurate-are-facial-recognition-systems-%E2%80%93-and-why-does-it-matter.

[4] “HU Facial Recognition Software Predicts Criminality.” _Harrisburg University_, 5 May 2020, https://web.archive.org/web/20200506013352/https://harrisburgu.edu/hu-facial-recognition-software-identifies-potential-criminals/.

[5] Coalition for Critical Technology. “Abolish the #TechToPrisonPipeline.” _Medium_, 21 Sept. 2021, https://medium.com/@CoalitionForCriticalTechnology/abolish-the-techtoprisonpipeline-9b5b14366b16.

[6] Walsh, Kelly, et al. _The Author(s) Shown below Used Federal Funding Provided by …_ Office of Justice Programs, 1 Sept. 2017, https://www.ojp.gov/pdffiles1/nij/grants/251115.pdf.