The best part of my experience with the HTC Vive was how real the visual simulation felt: I didn’t dare step too close to, or walk through, a simulated robot standing right in front of me. Part of me still knew that VR is a fictional environment, but my eyes believed there was a robot right in front of me whose body I couldn’t simply step through.

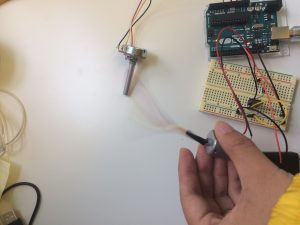

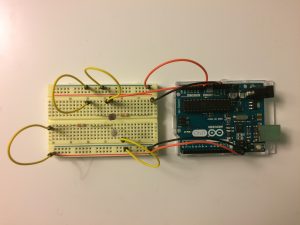

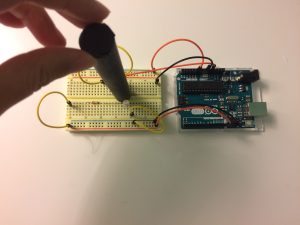

However, when I finally decided to push my hand against the robot, there was no resisting force to stop my hand from moving through its body. This is something that could be improved. For example, haptics could be used to enhance this part of the VR experience. One illustration is the video “Haptic Retargeting: Dynamic Repurposing of Passive Haptics for Enhanced Virtual Reality”, where a single box is used to create realistic haptic feedback.

There were also some downsides to my experience with the HTC Vive. I lost my sense of orientation and direction in the real world while I was immersed in the VR environment. This caused problems because I couldn’t see when I was getting too close to a wall or tangling up the cables on the floor. To solve this issue, I think the mixed reality technology developed by Magic Leap could be a great opportunity. By overlaying virtual content on the real environment, it could make the experience feel more realistic while keeping users aware of what’s going on around them.