“That’s A Blatant Copy!”: Amazon’s Anticompetitive Behavior and Its Impacts on Privacy

By Mike Varner | October 27, 2022

Amazon’s dual role as both a marketplace and a competitor has been under the microscope of European and American regulators for the past few years. Recently, the company attempted to avoid fines, so far unsuccessfully, by promising to make changes. In 2019, European regulators opened an investigation over concerns that the data Amazon collects on its merchants was being used to diminish competition by advantaging Amazon’s own private-label products [1].

What’s Private-Label?

Private-label refers to the common practice among retailers of selling their own products in competition with other sellers. A 2020 Wall Street Journal investigation, based on interviews with over 20 former private-label employees, found that Amazon uses merchants’ data when it develops and sells its own competing products. This evidence runs contrary not only to the company’s stated policies, but also to what its spokespeople attested to in Congressional hearings. Merchant data helped employees decide which product features were important to copy, how to price each product, and what profit margins to anticipate. In one example, former employees described how Amazon used a merchant’s sales and marketing data for a car trunk organizer to ensure that its private-label version could deliver higher margins [2].

[Image 1] Mallory Brangan https://www.cnbc.com/2022/10/12/amazons-growing-private-label-business-is-challenge-for-small-brands.html

Privacy Harms

Amazon claimed that employees were prohibited from using these data in offering private-label products and launched an internal investigation into the matter. The company claimed there were restrictions in place to keep private-label executives from accessing merchant data, but interviews revealed that use of these data was common practice and openly discussed in meetings. Even when the restrictions were enforced, managers would often “go over the fence” by asking analysts to create reports that divulged the information, or even to create fake “aggregated” data that secretly contained only a single merchant. These business practices are unfair and deceptive to merchants because they contradict both the company’s written policies and its verbal statements to Congress. The FTC should consider this in its ongoing investigations, as unfair and deceptive business practices are within its purview [3]. Other agencies such as the SEC are also looking into this matter, and the USDOJ is investigating the company for obstructing justice in relation to its 2019 Congressional hearings [4].
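To make the “aggregated” data problem concrete, here is a minimal sketch of the kind of safeguard that a single-merchant aggregate defeats: a report that refuses to publish a category total unless enough distinct merchants contribute to it. The function name, the threshold, and the sample rows are all hypothetical and invented for illustration; nothing in the reporting describes how Amazon’s internal tools actually work.

```python
from collections import defaultdict

# Hypothetical threshold: the minimum number of distinct merchants that must
# contribute to a category before its total may appear in a report.
MIN_MERCHANTS = 3


def aggregate_sales(rows, min_merchants=MIN_MERCHANTS):
    """Sum units sold per category, suppressing thinly populated categories.

    rows: iterable of (category, merchant_id, units_sold) tuples.
    Returns a dict mapping category -> total units, omitting any category
    with fewer than `min_merchants` distinct merchants.
    """
    merchants = defaultdict(set)
    totals = defaultdict(int)
    for category, merchant_id, units in rows:
        merchants[category].add(merchant_id)
        totals[category] += units
    return {
        category: total
        for category, total in totals.items()
        if len(merchants[category]) >= min_merchants
    }


# Invented sample data: one category has a single seller, so its "aggregate"
# would simply be that seller's private sales figures.
sample_rows = [
    ("car trunk organizers", "merchant_a", 120),
    ("car trunk organizers", "merchant_a", 95),
    ("phone cases", "merchant_b", 40),
    ("phone cases", "merchant_c", 55),
    ("phone cases", "merchant_d", 30),
]

report = aggregate_sales(sample_rows)
```

In this sketch the single-seller category is dropped from the report, while the category with three sellers is published as a combined total. An “aggregate” built from one merchant skips exactly this kind of check.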

[Image 2] Nate Sutton, an Amazon associate general counsel, told Congress in July: ‘We don’t use individual seller data directly to compete’. [2]

Search Rank Manipulation

Amazon has made similarly concerning statements about its search rank algorithm over the years. Amid internal dissent in 2019, Amazon changed its product search algorithm to highlight products that are more profitable for Amazon. Internal counsel initially rejected a proposal to add profit directly into the search rank algorithm, citing ongoing European investigations and concerns that the change would not be in customers’ best interest (a guiding principle for Amazon). Despite the explicit exclusion of profit as a variable in the algorithm, former employees indicated that engineers would simply add enough new variables to proxy for profit. Engineers ran A/B tests to work out how to proxy for profit and, unsurprisingly, found something that worked. They back-solved for profit using a variety of variables to get the best of both worlds: they could “truthfully” represent that they don’t use profit while using a composite metric that is effectively equivalent. As one of the many checks required before changing the algorithm, engineers were explicitly prevented from including variables that decreased profitability metrics. In total, while it could be strictly true that the search rank algorithm does not include profit, Amazon has optimized for profitability through a series of incentive structures [5]. Regulators should treat this as a deceptive practice in their ongoing investigations, because the claim that profit is not included obscures the lengths Amazon has gone to in order to achieve its desired outcome.
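The proxy idea can be illustrated with a toy example. The sketch below builds a ranking score from two variables that are not profit and then measures how closely that score tracks profit anyway. Every number, variable name, and weight here is invented for illustration; it is not Amazon’s algorithm, and the reporting does not say which variables were actually used.

```python
from math import sqrt

# Invented catalog of six products. Profit is known to the analyst but is
# deliberately NOT an input to the ranking score.
profit = [8.0, 4.0, 6.0, 3.0, 2.0, 9.0]

# Hypothetical stand-in variables that happen to move with profit.
is_private_label = [1, 0, 1, 0, 0, 1]
fulfilled_by_platform = [1, 1, 0, 0, 0, 1]


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# A composite score with hand-picked (hypothetical) weights. Profit never
# appears in this formula.
score = [3 * pl + 2 * fb for pl, fb in zip(is_private_label, fulfilled_by_platform)]

# How closely does the "profit-free" score track profit?
r = pearson(profit, score)


def top_k(values, k):
    """Indices of the k largest values."""
    return set(sorted(range(len(values)), key=lambda i: values[i], reverse=True)[:k])


top3_by_profit = top_k(profit, 3)
top3_by_score = top_k(score, 3)
```

In this toy data the composite score correlates strongly with profit and promotes the same three products that ranking by profit would, even though profit is never an input. That is the sense in which a claim of “we don’t use profit” can be literally true and still misleading.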

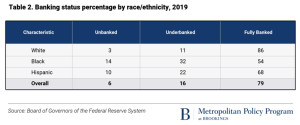

[Image 3] Jessica Kuronen [5]

What’s Next?

In July, Amazon attempted to end its European antitrust investigations by offering to stop collecting nonpublic data on merchants [6]. Amazon hopes that this concession will prevent regulators from issuing fines, but the proposal has been met with strong criticism from a variety of groups [7]. The outcome of this proposal is pending, but similar litigation continues. Just last week, UK regulators filed similar antitrust claims against Amazon over its “buy box” [8]. Amazon has been considering getting out of the private-label business due to lower-than-expected sales, and leadership has been slowly downsizing the private-label business over the past few years [9].

References:

[1] https://ec.europa.eu/commission/presscorner/detail/pl/ip_19_4291

[2] https://www.wsj.com/articles/amazon-scooped-up-data-from-its-own-sellers-to-launch-competing-products-11587650015?mod=article_inline

[3] https://www.wsj.com/articles/amazon-competition-shopify-wayfair-allbirds-antitrust-11608235127?mod=article_inline

[4] https://www.retaildive.com/news/house-refers-amazon-to-doj-for-potential-criminal-conduct/620246/

[5] https://www.wsj.com/articles/amazon-changed-search-algorithm-in-ways-that-boost-its-own-products-11568645345

[6] https://www.nytimes.com/2022/07/14/business/amazon-europe-antitrust.html

[7] https://techcrunch.com/2022/09/12/amazon-eu-antitrust-probe-weak-offer/

[8] https://techcrunch.com/2022/10/20/amazon-uk-buy-box-claim-lawsuit/

[9] https://www.wsj.com/articles/amazon-has-been-slashing-private-label-selection-amid-weak-sales-11657849612