Hey Alexa, where’s my data going?

Anonymous | June 23, 2022

In exchange for comfort and convenience, households that opt for smart home devices like Amazon Alexa give up a surprising amount of personal data and security – but how much, exactly?

First, what are smart home devices? They range from gaming systems to refrigerators, and their common thread is that they need an Internet connection to function fully. Many of these devices are marketed as a way to improve and streamline day-to-day life, often with the user’s smartphone serving as the remote control.[1] Well-known choices include Amazon Alexa/Echo, Google Home and Nest, Ring doorbells, and Samsung Smart TVs.

Fig. 1: An infographic showing the most popular smart home speakers.

Smart home devices have gained popularity in recent years, but they have also faced pushback from concerned groups. Interestingly, the Consumers International survey shows that 63% of people surveyed distrust smart/connected devices because of how they collect data on people and their behaviors, yet about 72% own at least one smart device.[2] In another survey, conducted by CUJO AI, a whopping 98% of 4,000 participants expressed privacy concerns about smart home devices, yet about half of them did not take the necessary precautions to secure themselves and their devices.[3] In this post, I use Nissenbaum’s framework of contextual integrity to investigate prominent privacy risks from one of the top smart home devices, Amazon Alexa.

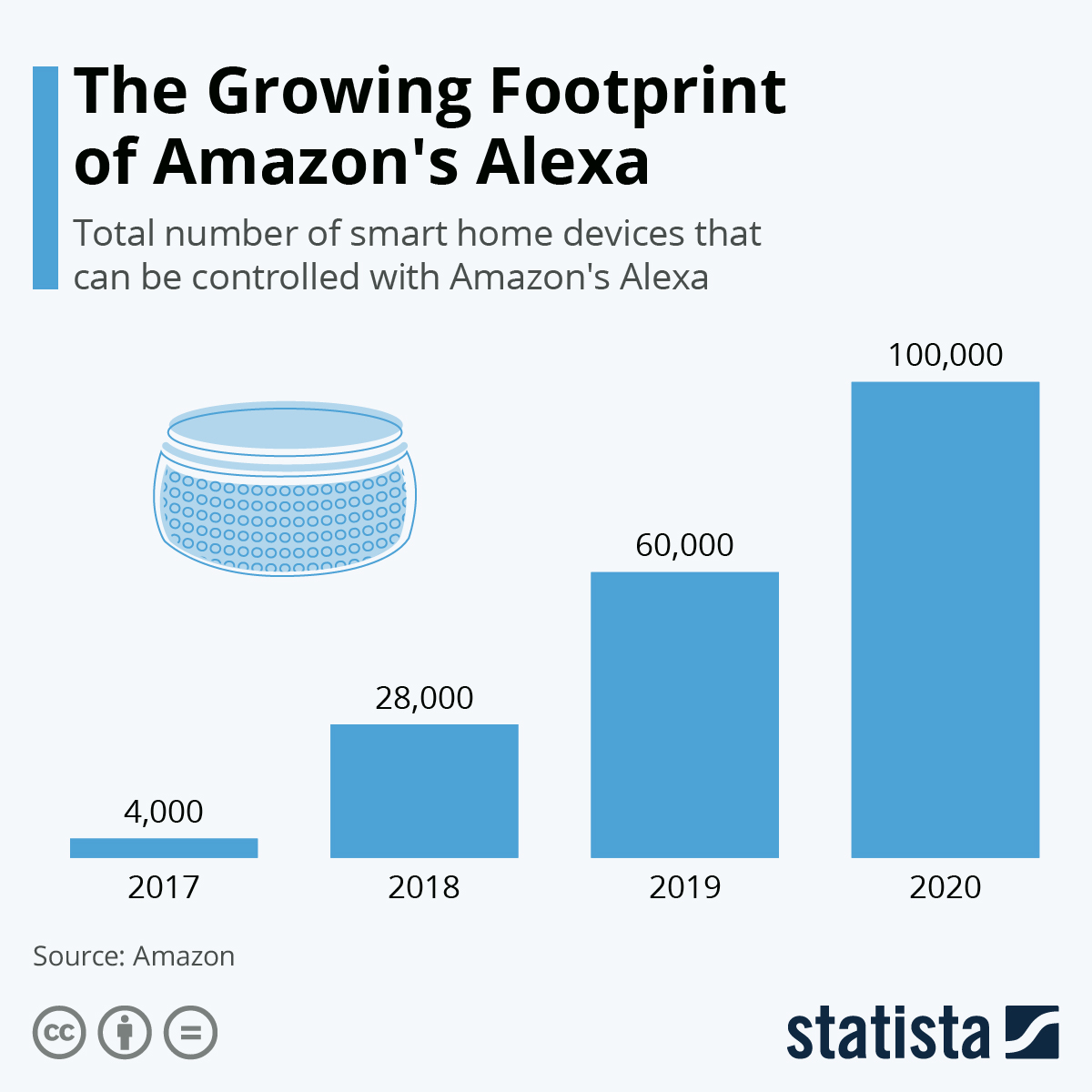

Fig. 2: An infographic of how many smart home devices Alexa can control.

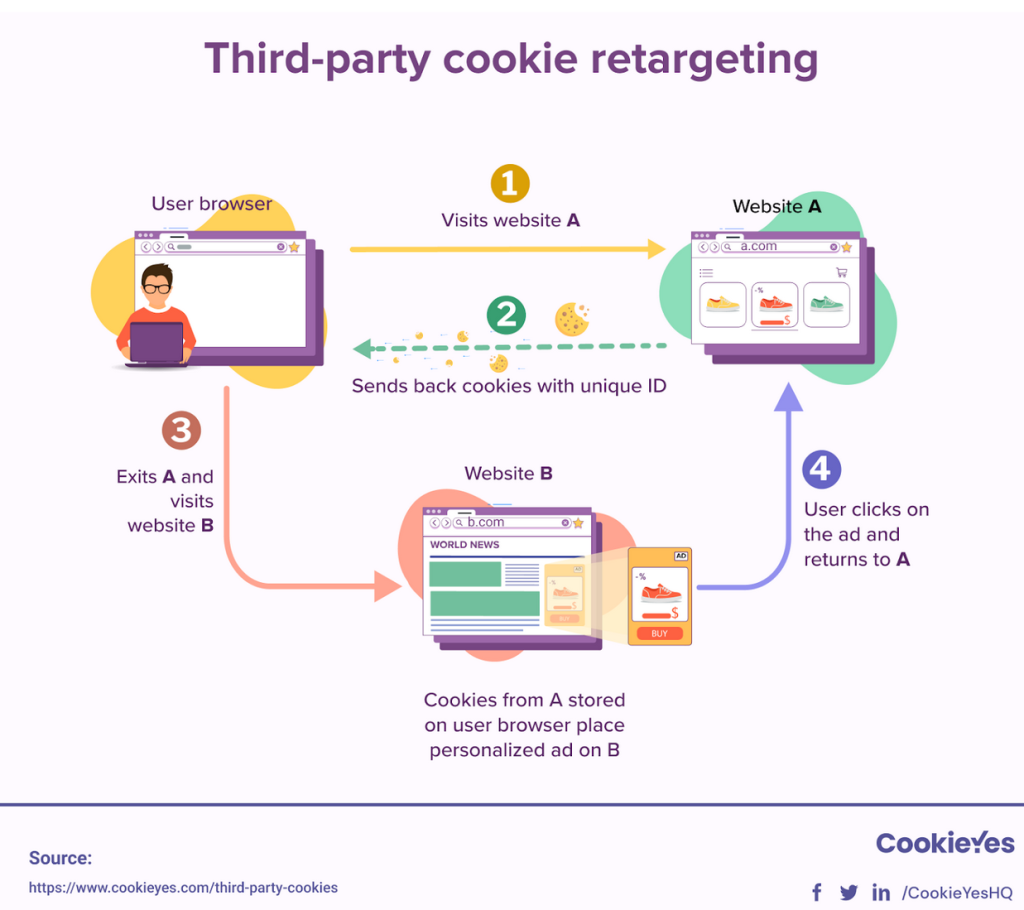

Smart home devices collect personal information for as long as they are in operation, which could mean 24/7 insight into someone’s life. With Alexa specifically, a study by Lentzsch et al. found that skills, the add-on applications and commands that extend Alexa with different functions, had several privacy issues that could let third parties obtain personal information.[4] For example, fraud was a risk on the skills store because an unrelated party could pose as a reputable organization; when a user downloaded and used its skill, their personal data would go to this third party instead of the expected organization. Another oversight: although Amazon required skill publishers to have a public privacy policy detailing how they intend to collect and use personal data, 23.3% out of about a thousand skills had incredibly opaque policies or none at all, and still got access to personal information through Alexa.[4]

Using Nissenbaum’s framework of contextual integrity, we can categorize the data subject and sender of the data as the user of the smart home device, the primary recipient of the data as Amazon Alexa, the information type as personal information such as shopping and living habits and voice, and the transmission principle as transmission through the smart home device and the Internet.[5] When this personal data, intended for Alexa’s use, is leaked to third parties without the user’s knowledge, the flow no longer matches the context in which it was shared, and its contextual integrity has been compromised.
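To make the framework a little more concrete, here is a minimal Python sketch of an information flow described by the five parameters above. It is only an illustration: the `InformationFlow` class and the `changed_parameters` helper are hypothetical names, not part of Nissenbaum’s work or the Lentzsch et al. study, and treating any changed parameter as a possible breach is a deliberate simplification of the framework.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class InformationFlow:
    """One information flow, described by the five parameters used above (hypothetical model)."""
    data_subject: str
    sender: str
    recipient: str
    information_type: str
    transmission_principle: str


def changed_parameters(expected: InformationFlow, actual: InformationFlow) -> list[str]:
    """Return the names of the parameters where the actual flow departs from the expected one."""
    return [
        f.name
        for f in fields(expected)
        if getattr(expected, f.name) != getattr(actual, f.name)
    ]


# The flow the user expects when talking to the device.
expected = InformationFlow(
    data_subject="device user",
    sender="device user",
    recipient="Amazon Alexa",
    information_type="shopping and living habits, voice",
    transmission_principle="through the smart home device and the Internet",
)

# The same data ending up with an unexpected third party (assumed label for illustration).
leaked = replace(expected, recipient="third-party skill publisher")

mismatches = changed_parameters(expected, leaked)
print(mismatches)
```

In this toy version, the only parameter that differs is the recipient, which mirrors the skill-store scenario: the user, the data, and the channel are unchanged, but the data reaches someone the user never agreed to share it with.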

This is not to say that smart home devices are all bad, as they indubitably provide lifestyle benefits, especially to those who may be disabled or otherwise disadvantaged. The tangible benefits do not outweigh the current privacy costs, however, so more work is needed to protect people and their information, whether that work is legal, technological, or ethical. We can start by raising awareness of exactly how much agency people have over their personal data and privacy, and by giving people the right to control them. Additionally, companies that make smart home devices should take ownership of making privacy policies and disclosures more digestible and transparent for the average consumer, as well as allowing consumers to opt out of data harvesting.[6]

Hey Alexa, opt me out of data collection!

Positionality and Reflexivity Statement

I am an Asian American, middle class, cisgender woman. I have a smartphone, a smart watch, and a gaming system, but my household does not have any other smart home devices like TVs and kitchen appliances. Prior to this post, I was already wary of smart home devices and my stance remains the same. However, I have used several applications and features, like fitness tracking on both my phone and watch, that likely collected data about me that could be compromised. Moving forward, I will be more judicious about my smart device use and protect myself where possible, even in this increasingly data-driven world with little privacy where it seems like one’s every step is being watched. I encourage you to evaluate your relationship with your smart home devices and take your privacy into your own hands.

References

[1] Kaspersky. (n.d.). How safe are smart homes? Retrieved 2022, from https://usa.kaspersky.com/resource-center/threats/how-safe-is-your-smart-home

[2] Consumers International and Internet Society. (2019, May). The Trust Opportunity: Exploring Consumers’ Attitudes to the Internet of Things. Retrieved 2022, from https://www.internetsociety.org/wp-content/uploads/2019/05/CI_IS_Joint_Report-EN.pdf

[3] CUJO AI. (2021, October). Cybersecurity Perceptions Survey. Retrieved 2022, from https://cujo.com/wp-content/uploads/2021/10/Cybersecurity-Perceptions-Survey-2021.pdf

[4] Lentzsch, C. et al. (2021, February). Hey Alexa, is this Skill Safe?: Taking a Closer Look at the Alexa Skill Ecosystem. Retrieved 2022, from https://anupamdas.org/paper/NDSS2021.pdf

[5] Nissenbaum, H. F. (2011). A Contextual Approach to Privacy Online. Daedalus 140 (4), Fall 2011: 32-48. Available at SSRN: https://ssrn.com/abstract=2567042

[6] Tariq, A. (2021, January 21). The Challenges and Security Risks of Smart Home Devices. Retrieved 2022, from https://www.entrepreneur.com/article/362497

Images

[Fig. 1] https://www.statista.com/chart/16068/most-popular-smart-speakers-in-the-us/

[Fig. 2] https://www.statista.com/chart/22338/smart-home-devices-compatible-with-alexa/