The Unequal American Dream: Hidden Bias in Mortgage Lending AI/ML Algorithms

By Autumn Rains | September 17, 2021

Owning a home in the United States is a cornerstone of the American Dream. Despite the economic downturn from the COVID-19 pandemic, the U.S. housing market saw double-digit growth rates in home pricing and equity appreciation in 2021. According to the Federal Housing Finance Agency, U.S. house prices grew 17.4 percent in the second quarter of 2021 versus the second quarter of 2020 and increased 4.9 percent from the first quarter of 2021 (U.S. House Price Index Report, 2021). With prices climbing this quickly, obtaining a mortgage loan has become even more vital to the home-buying process for potential homeowners. With advancements in machine learning within financial markets, mortgage lenders have introduced digital products to speed up the mortgage lending process and serve a broader, growing customer base.

Unfortunately, the ability to obtain a mortgage from lenders is not equal for all potential homeowners due to bias within the algorithms of these digital products. According to the Consumer Financial Protection Bureau (Mortgage refinance loans, 2021):

“Initial observations about the nation’s mortgage market in 2020 are welcome news, with improvements in the overall volume of home-purchase and refinance loans compared to 2019,” said CFPB Acting Director Dave Uejio. “Unfortunately, Black and Hispanic borrowers continued to have fewer loans, be more likely to be denied than non-Hispanic White and Asian borrowers, and pay higher median interest rates and total loan costs. It is clear from that data that our economic recovery from the COVID-19 pandemic won’t be robust if it remains uneven for mortgage borrowers of color.”

New Levels of Discrimination? Or Perpetuation of History?

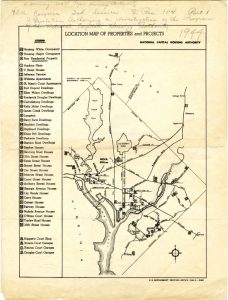

Discrimination based on race has been an undertone throughout the history of mortgage lending in the United States. Housing programs introduced under the New Deal in 1933 were forms of segregation: people of color were excluded from new suburban communities and instead placed into urban housing projects. The following year, the Federal Housing Administration (FHA) was established and created a policy known as ‘redlining.’ This policy furthered segregation by refusing to insure mortgages for properties in or near African-American neighborhoods. While this policy was in effect, the FHA also subsidized builders who prioritized suburban development projects, on the condition that none of these homes be sold to African-Americans (Gross, 2017).

Bias in the Algorithms

Researchers at the UC Berkeley Haas School of Business found that Black and Latino borrowers were charged interest rates 7.9 basis points (0.079 percentage points) higher, whether they applied online or in person (Public Affairs, 2018). Similarly, The Markup explored this bias in mortgage lending and found the following about loan denials nationwide:

Holding 17 different factors steady in a complex statistical analysis of more than two million conventional mortgage applications for home purchases, we found that lenders were 40 percent more likely to turn down Latino applicants for loans, 50 percent more likely to deny Asian/Pacific Islander applicants, and 70 percent more likely to deny Native American applicants than similar White applicants. Lenders were 80 percent more likely to reject Black applicants than similar White applicants. […] In every case, the prospective borrowers of color looked almost exactly the same on paper as the White applicants, except for their race.
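To make "holding factors steady" concrete, here is a minimal sketch of one way to compare denial odds between two groups while controlling for a single factor. It is not The Markup's method: it swaps in the simpler Mantel-Haenszel stratified odds ratio, and the `Stratum` class, the credit-band strata, and every count below are hypothetical, invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Stratum:
    """Counts for one band of otherwise-similar applicants (hypothetical data)."""
    denied_a: int     # group A applicants who were denied
    approved_a: int   # group A applicants who were approved
    denied_b: int     # reference group B applicants who were denied
    approved_b: int   # reference group B applicants who were approved


def odds_ratio(s: Stratum) -> float:
    """Odds of denial for group A divided by odds of denial for group B."""
    return (s.denied_a * s.approved_b) / (s.approved_a * s.denied_b)


def mantel_haenszel_odds_ratio(strata) -> float:
    """Pool the per-stratum comparisons into one adjusted odds ratio."""
    numerator = 0.0
    denominator = 0.0
    for s in strata:
        n = s.denied_a + s.approved_a + s.denied_b + s.approved_b
        numerator += s.denied_a * s.approved_b / n
        denominator += s.approved_a * s.denied_b / n
    return numerator / denominator


# Hypothetical applicants, split into two credit-score bands so that
# each comparison is made between applicants who look alike on paper.
strata = [
    Stratum(denied_a=30, approved_a=70, denied_b=20, approved_b=80),  # lower band
    Stratum(denied_a=10, approved_a=90, denied_b=6, approved_b=94),   # higher band
]

adjusted = mantel_haenszel_odds_ratio(strata)
print(f"Adjusted odds ratio of denial: {adjusted:.2f}")
```

With these made-up counts the adjusted odds ratio comes out at about 1.72, meaning the odds of denial for group A are roughly 70 percent higher than for group B among applicants in the same credit band. An analysis that holds 17 factors steady would need a regression model rather than two strata, but the question it answers has the same shape.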

Mortgage lenders approach risk evaluation in the digital lending process much as traditional banks do. The criteria include income, assets, credit score, current debt, and liabilities, among other factors in line with federal guidelines. The Consumer Financial Protection Bureau issued guidelines after the last recession to reduce the risk of predatory lending to consumers. If a potential home buyer does not meet these criteria, they are classified as a risk. These criteria tend to put people of color at a disadvantage. For example, credit scores are typically calculated based on individual spending and payment habits. Rent is typically the largest payment individuals make routinely, but landlords generally do not report rental payments to credit bureaus. According to an article in the New York Times (Miller, 2020), more than half of Black Americans rent. Alanna McCargo of the Urban Institute elaborates within the article:

“We know the wealth gap is incredibly large between white households and households of color,” said Alanna McCargo, the vice president of housing finance policy at the Urban Institute. “If you are looking at income, assets and credit — your three drivers — you are excluding millions of potential Black, Latino and, in some cases, Asian minorities and immigrants from getting access to credit through your system. You are perpetuating the wealth gap.” […] As of 2017, the median household income among Black Americans was just over $38,000, and only 20.6 percent of Black households had a credit score above 700.

Remedies for Bias

Potential solutions to reduce hidden bias in mortgage lending algorithms could include widening the data criteria used for risk evaluation decisions. However, the law bars some demographic factors from consideration. Federal fair lending laws, including the Fair Housing Act of 1968, prohibit lenders from considering sex, religion, race, or marital status in mortgage underwriting. Even so, these attributes may enter the evaluation by proxy through variables like timeliness of bill payments, a part of the credit score evaluation previously discussed. If data scientists have additional data points beyond the scope of the recommended guidelines of the Consumer Financial Protection Bureau, should these be considered? If so, do any of these extra data points include bias directly or by proxy? These considerations pose quite a dilemma for data scientists, digital mortgage lenders, and companies involved in credit modeling.
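One way to start answering the proxy question is a simple audit that checks how strongly each candidate variable tracks membership in a protected group. The sketch below is only an illustration: the feature names, the sample values, and the 0.5 cutoff are all hypothetical, and the group indicator is used solely for auditing, never as a model input. A plain correlation screen like this one will also miss proxies that are nonlinear or that only emerge when several variables are combined.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no signal about the group
    return cov / sqrt(var_x * var_y)


def flag_proxies(features, group, threshold=0.5):
    """Names of features whose absolute correlation with group membership
    meets the threshold (the threshold is an arbitrary, hypothetical cutoff)."""
    return sorted(
        name
        for name, values in features.items()
        if abs(pearson(values, group)) >= threshold
    )


# Hypothetical audit sample: 1 marks applicants in a protected group.
group = [1, 1, 1, 1, 0, 0, 0, 0]

# Hypothetical candidate model inputs for the same eight applicants.
features = {
    "late_payments": [3, 2, 4, 3, 1, 0, 1, 0],
    "loan_amount_k": [200, 350, 250, 300, 300, 250, 350, 200],
}

print(flag_proxies(features, group))
```

In this made-up sample, `late_payments` is flagged because it moves closely with group membership, while `loan_amount_k` is not. A flag does not mean a variable must be dropped; it marks where a closer review of that variable is worth the effort.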

Another potential solution in the digital mortgage lending process could be the inclusion of a diverse team of loan officers in the final step of the risk evaluation process. Until lenders can place higher confidence in the ability of AI/ML algorithms to reduce hidden bias, loan officers should be involved to ensure fair access for all consumers. In addition, data scientists at mortgage lenders with digital offerings should consider alternative credit scoring models that include rental payment history. By doing so, lenders can create a more holistic picture of potential homeowners’ total spending and payment history. This would give all U.S. residents an equal opportunity to pursue the American Dream of homeownership at a time when working from home is a new reality.

Works Cited

- Gross, T. (2017, May 3). A ‘forgotten history’ of how the U.S. government segregated America. NPR. Retrieved September 17, 2021, from https://www.npr.org/2017/05/03/526655831/a-forgotten-history-of-how-the-u-s-government-segregated-america.

- Miller, J. (2020, September 18). Is an algorithm less racist than a loan officer? The New York Times. Retrieved September 17, 2021, from https://www.nytimes.com/2020/09/18/business/digital-mortgages.html.

- Mortgage refinance loans drove an increase in closed-end originations in 2020, new CFPB report finds. Consumer Financial Protection Bureau. (2021, August 19). Retrieved September 17, 2021, from https://www.consumerfinance.gov/about-us/newsroom/mortgage-refinance-loans-drove-an-increase-in-closed-end-originations-in-2020-new-cfpb-report-finds/.

- Public Affairs, UC Berkeley. (2018, November 13). Mortgage algorithms perpetuate racial bias in lending, study finds. Berkeley News. Retrieved September 17, 2021, from https://news.berkeley.edu/story_jump/mortgage-algorithms-perpetuate-racial-bias-in-lending-study-finds/.

- U.S. House Price Index Report 2021 Q2. Federal Housing Finance Agency. (2021, August 31). Retrieved September 17, 2021, from https://www.fhfa.gov/AboutUs/Reports/Pages/US-House-Price-Index-Report-2021-Q2.aspx.

Image Sources

- Picture 1: https://www.dcpolicycenter.org/wp-content/uploads/2018/10/Location_map_of_properties_and_projects-778x1024.jpg

- Picture 2: https://static01.nyt.com/images/2019/12/08/business/06View-illo/06View-illo-superJumbo.jpg