Ethical Implication of Generative AI

By Gabriel Hudson | April 1, 2019

Generative data models are rapidly growing in popularity and sophistication in the world of artificial intelligence (AI). Rather than using existing data to classify an individual or predict some aspect of a dataset these models actually generate new content. Recently developments in generative data modeling have begun to blur lines not only between real and fake, but also between machine and human generated content creating a need to look at the ethical issues that arise as the technologies evolve.

Bots

Bots are an older technology that has already been used over a large range of functions such as automated customer service or directed personal advertising. Bots are generative (almost exclusively creating language), but historically have been very narrow in function and limited to small interaction on a specified topic. In May of 2018 Google debuted a Bot system called Duplex that was able to successfully “fool” a significant number of test subjects while carrying out daily tasks such as booking restaurant reservations and making a hair salon appointment (link). This, combined with ubiquity of digital assistants, sparked a resurgence in bot advancement.

Deepfake

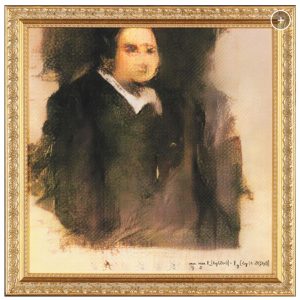

In this case Deepfake is a generalized term used to describe very realistic “media” (such images, videos, music, and speech) created with an AI technology know as a Generative Adversarial Network (GAN). GANs were originally introduced in 2014 but came into prominence when a new training method was published in 2018. GANs represent the technology behind seemingly innocuous generated media such as the first piece of AI generated art sold (link):

as well as a much more harmful set of false pornographic videos created using celebrities faces

(link):

The key technologies in this area were fully release fully released to the public upon their completion.

Open AI’s GPT-2

In February 2019 Open AI (a non-profit AI research organization founded in part by Elon Musk) released a report claiming a significant technology breakthrough in generating human sounding text as well as promising sample results (link). Open AI, however, against longstanding trends in the field and their own history chose not to release the full model citing potential for misuse on a large scale. Similar to GPT-2, there have also been breakthroughs in generative technology in other media like images, that have been released to the public. All of the images in the subsequent frame were generated with technology developed by Nvidia.

In limiting access to a new technology Open AI brought to the forefront some discussions about how the rapid evolution of generative models must be handled. Now that almost indistinguishable “false” content can be generated in large volume with ease it is important to consider who is tasked with deciding and maintaining the integrity of online content. In the near future, discussions must be extended about the reality of the responsibilities of both consumers and distributors of data and the way their “rights” to know fact from fiction and human from machine may be changing.