Algorithmic Misclassification – the (Pretty) Good, the Bad, and the Ugly by Arnobio Morelix

Every day, your identity and your behavior are algorithmically classified countless times. Your credit card transaction is labeled “fraudulent” or not. Political campaigns decide whether you are a “likely voter” for their candidate. You constantly claim, and are judged on, your identity as “not a robot” through CAPTCHAs. Add to this the classification of your emails, the face recognition in your phone, and the targeted ads you get, and it is easy to imagine hundreds of such classification instances per day.

For the most part, these classifications are convenient and pretty good for you and the organizations running them. So much so that we can almost forget they exist, unless they go obviously wrong. I tend to get a lot of examples of these predictions working poorly. I am a Latino living in the U.S., and I often get ads in Spanish. That would be pretty good targeting, except that I am a Brazilian Latino, and my native language is Portuguese, not Spanish.

Needless to say, this misclassification causes no real harm. My online behavior might look similar enough to that of a native Spanish speaker living in the U.S., and users like me getting mistargeted ads may be no more than a rounding error. Although it is in no one’s interest that I get these ads (I am wasting my time, and the company is wasting money), the targeting is probably good enough.

This “good enough” mindset is at the heart of a lot of prediction applications in data science. As a field, we constantly put people in boxes to make decisions about them, even though we know our predictions will inevitably be imperfect. “Pretty good” is fine most of the time, and it certainly is for ad targeting.

But these automatic classifications can go from good to bad to ugly fast, either because of the scale of deployment or because of tainted data. As we move beyond the fields they have arguably been perfected for, like social media and online ads, and into higher-stakes ones, we get into problems.

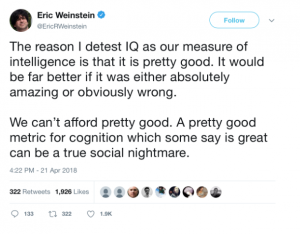

Take psychometric tests, for example. Companies are increasingly using them to weed out candidates: 8 of the top 10 private employers in the U.S. use related pre-hire assessments. Some of these companies report good results, with higher performance and lower turnover. [1] The problem is that these tests can be pretty good but are far from great. IQ tests, a popular component of psychometric assessments, are a poor predictor of cognitive performance across many different tasks, though they are certainly correlated with performance in some of them. [2]

When a single company weeds out a candidate who would otherwise perform well, it may not be a big problem by itself. But it can be a big problem when the tests are used at scale, and a job seeker is consistently excluded from jobs they would perform well in. And while the use of these tests by a single private actor may well be justified on hiring-efficiency grounds, it should give us pause to see them used at scale for both private and public decision making (e.g., testing students).
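To make the scale argument concrete, here is a minimal sketch under loudly stated assumptions. The 20% false-rejection rate is invented for illustration, and the function `prob_excluded_everywhere` is hypothetical. The sketch compares two idealized worlds: one where every employer screens independently, and one where every employer uses the same test and therefore repeats the same mistake about the same candidate.

```python
def prob_excluded_everywhere(false_reject_rate: float,
                             n_employers: int,
                             shared_test: bool) -> float:
    """Chance that a qualified candidate is wrongly rejected by every employer.

    Idealized assumptions (not from any real hiring data):
    - shared_test=True: all employers use one test that always gives this
      candidate the same result, so a single error follows them everywhere.
    - shared_test=False: each employer's screen errs independently.
    """
    if shared_test:
        return false_reject_rate
    return false_reject_rate ** n_employers


rate = 0.2  # hypothetical share of qualified candidates a screen wrongly rejects
for n in (1, 5, 10):
    independent = prob_excluded_everywhere(rate, n, shared_test=False)
    shared = prob_excluded_everywhere(rate, n, shared_test=True)
    print(f"{n:>2} employers: independent={independent:.7f}  shared={shared:.2f}")
```

With independent screens, the chance of being shut out everywhere shrinks quickly as the number of employers grows. With one shared test it does not shrink at all, which is the sense in which a "pretty good" test becomes a bigger problem at scale.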

Problems with “pretty good” classifications also arise from blind spots in the prediction, as well as tainted data. Somali markets in Seattle were barred by the federal government from accepting food stamps because many of their transactions looked fraudulent: there were many infrequent, large-dollar transactions, driven by the fact that many families in the community they serve shopped only once a month, often sharing a car to do so (the USDA later reversed the decision). [3] [4] African American voters in Florida were disproportionately disenfranchised because their names were more often automatically matched to felons’ names, since African Americans have a disproportionate share of common last names (a legacy of original names being stripped due to slavery). [5] Also in Florida, black defendants were more likely to be algorithmically classified as “high risk,” and among defendants who did not reoffend, blacks were over twice as likely as whites to have been labeled risky. [6]

In all of these cases, there is not necessarily evidence of malicious intent. The results can be explained by a mix of “pretty good” predictions and data reflecting previous patterns of discrimination, even if the people designing and applying the algorithms had no intention to discriminate.
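The risk-score example rests on a specific quantity: among people who did not reoffend, the share who were nonetheless labeled high risk (the false positive rate), computed separately for each group. The sketch below shows one way to compute it. The records are an invented toy dataset with placeholder groups "A" and "B", not the Florida data, and the function name is hypothetical.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """Per-group share of non-reoffenders who were labeled high risk.

    records: iterable of (group, labeled_high_risk, reoffended) tuples.
    """
    flagged = defaultdict(int)
    total = defaultdict(int)
    for group, labeled_high_risk, reoffended in records:
        if reoffended:
            continue  # the metric only looks at people who did not reoffend
        total[group] += 1
        if labeled_high_risk:
            flagged[group] += 1
    return {group: flagged[group] / total[group] for group in total}


# Invented toy records, for illustration only.
toy_records = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6
    + [("B", True, False)] * 2 + [("B", False, False)] * 8
    + [("A", True, True)] * 5 + [("B", True, True)] * 5
)

rates = false_positive_rate_by_group(toy_records)
print(rates)
```

Nothing in this calculation refers to intent. A gap between groups can appear purely from how the scores and the underlying data line up.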

While the examples I mentioned here span a broad range of technical sophistication, there’s no strong reason to believe the most sophisticated techniques are getting rid of these problems. Even the newest deep learning techniques excel at identifying relatively superficial correlations, not deep patterns or causal paths, as entrepreneur and NYU professor Gary Marcus explains in his January 2018 paper “Deep Learning: A Critical Appraisal.” [7]

The key problem with the explosion in algorithmic classification is that we are invariably designing life around a slew of “pretty good” algorithms. “Pretty good” may be a great outcome for ad targeting. But when we deploy such algorithms at scale on applications from voter registration exclusions to hiring to loan decisions, the final outcome may well be disastrous.

References

[1] Weber, Lauren. “Today’s Personality Tests Raise the Bar for Job Seekers.” Wall Street Journal. https://www.wsj.com/articles/a-personality-test-could-stand-in-the-way-of-your-next-job-1429065001

[2] Hampshire, Adam et al. “Fractionating Human Intelligence.” https://www.cell.com/neuron/fulltext/S0896-6273(12)00584-3

[3] Davila, Florangela. “USDA disqualifies three Somalian markets from accepting federal food stamps.” Seattle Times. http://community.seattletimes.nwsource.com/archive/?date=20020410&slug=somalis10m

[4] Parvas, D. “USDA reverses itself, to Somali grocers’ relief.” Seattle Post-Intelligencer. https://www.seattlepi.com/news/article/USDA-reverses-itself-to-Somali-grocers-relief-1091449.php

[5] Stuart, Guy. “Databases, Felons, and Voting: Errors and Bias in the Florida Felons Exclusion List in the 2000 Presidential Elections.” Harvard University, Faculty Research Working Papers Series.

[6] Corbett-Davies, Sam et al. “Algorithmic decision making and the cost of fairness.” https://arxiv.org/abs/1701.08230

[7] Marcus, Gary. “Deep Learning: A Critical Appraisal.” https://arxiv.org/abs/1801.00631