Description:

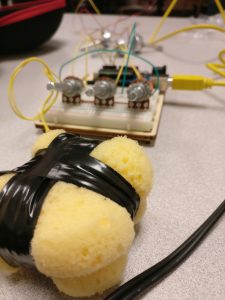

I used Daniel Shiffman's Particles example to build a visualization controlled by three potentiometers and a force-sensitive resistor (FSR). The potentiometers set the RGB values of the foreground particles, and the background is drawn in the inverse of that color. The FSR sets the transparency of the particles. I used two artist's sponges as a diffusion pad for the FSR. Squeezing the pad vertically increases the pressure on the sensor, and with it the opacity of the particles. Squeezing it horizontally relieves the pressure and reduces the opacity.

Components:

1 Arduino UNO

1 FSR

3 Potentiometers

1 10k Ohm resistor

2 Artist sponges for force diffusion

Insulation tape

Arduino Code:

// Explosions in the Sky with an FSR, 3 pots and an Arduino UNO

// Code by Ganesh Iyer, UC Berkeley

// Date: 27th September, 2016

int fsrPin = A0; // the FSR and 10K pulldown are connected to A0

int fsrReading; // the analog reading from the sensor divider

// The RGB Pots

// NOTE: Put in the right pin here with the A(x) prefix

int potRedPin = A3;

int potRedReading;

int potGreenPin = A2;

int potGreenReading;

int potBluePin = A1;

int potBlueReading;

void setup() {

// put your setup code here, to run once:

Serial.begin(9600);

}

void loop() {

// put your main code here, to run repeatedly:

// read the three pots and the FSR, scale each from 0-1023 down to 0-255,

// and send them to Processing as "r,g,b,fsr,."

potRedReading = analogRead(potRedPin);

potRedReading = potRedReading/4;

Serial.print(potRedReading);

Serial.print(",");

potGreenReading = analogRead(potGreenPin);

potGreenReading = potGreenReading/4;

Serial.print(potGreenReading);

Serial.print(",");

potBlueReading = analogRead(potBluePin);

potBlueReading = potBlueReading/4;

Serial.print(potBlueReading);

Serial.print(",");

fsrReading = analogRead(fsrPin);

fsrReading = fsrReading/4;

Serial.print(fsrReading);

Serial.print(",");

Serial.print(".");

delay(100);

}
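
The Arduino sketch above sends one frame per loop: four readings scaled from the ADC's 0-1023 range down to 0-255, each followed by a comma, then a period as the terminator. As a rough illustration, the same format can be modeled in plain Java (the language Processing is built on) to produce test frames without the hardware attached. The class and method names below are hypothetical and not part of the original sketch.

```java
// Desktop model of the frame the Arduino loop prints (hypothetical helper).
final class FrameEncoder {
    /** Scale a 10-bit analogRead() value (0..1023) to 8 bits (0..255). */
    static int scale(int raw10) {
        return raw10 / 4;
    }

    /** Build one frame in the same order the Arduino prints it: r, g, b, fsr. */
    static String encode(int rawR, int rawG, int rawB, int rawFsr) {
        StringBuilder sb = new StringBuilder();
        for (int raw : new int[] { rawR, rawG, rawB, rawFsr }) {
            sb.append(scale(raw)).append(',');  // value followed by a comma
        }
        return sb.append('.').toString();       // '.' terminates the frame
    }

    public static void main(String[] args) {
        System.out.println(encode(1023, 512, 0, 256)); // prints 255,128,0,64,.
    }
}
```

The longest possible frame, `255,255,255,255,.`, is 17 characters.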

Processing Code:

// Explosions in the Sky by Ganesh Iyer

// Time: Ungodly

// Date: 27th September, 2016

// Based on Particles, by Daniel Shiffman.

// The idea is to use FSR to vary the alpha values of explosion

// Use the potentiometers to vary colors

// Processing Setup

import processing.serial.*;

String portname = "/dev/cu.usbmodem1411"; // or "COM5"

Serial port;

String buf="";

int cr = 13; // ASCII return == 13

int lf = 10; // ASCII linefeed == 10

color colorOfParticles;

float hexCode;

float r = 0;

float g = 0;

float b = 0;

float fsr = 0;

int serialVal = 0;

ParticleSystem ps;

PImage sprite;

void setup() {

port = new Serial(this, portname, 9600);

size(1024, 768, P2D);

orientation(LANDSCAPE);

sprite = loadImage("sprite.png");

ps = new ParticleSystem(10000);

// Writing to the depth buffer is disabled to avoid rendering

// artifacts due to the fact that the particles are semi-transparent

// but not z-sorted.

hint(DISABLE_DEPTH_MASK);

}

void draw () {

serialEvent(port);

background((255-r), (255-g), (255-b));

ps.update();

ps.display();

ps.setEmitter(width/2,(height/2)-200);

fill(255);

}

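void serialEvent(Serial p){

// Read one frame, terminated by '.' (ASCII 46)

String arduinoInput = p.readStringUntil(46);

if(arduinoInput != null ){

arduinoInput = trim(arduinoInput);

float inputs[] = float(split(arduinoInput, ','));

// Skip incomplete frames, e.g. the first one after connecting

if (inputs.length >= 4) {

r = inputs[0];

g = inputs[1];

b = inputs[2];

fsr = inputs[3];

}

}

}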

class Particle {

PVector velocity; // do not change this

float lifespan;

// Alpha is derived from the FSR reading. With no pressure on the FSR the alpha

// is 0 and the particles are invisible, so no mouse-click variable is needed.

// Color is derived from the RGB pots.

PShape part;

float partSize;

// Do not change

PVector gravity = new PVector(0,0.1);

// Do not change

Particle() {

partSize = random(10,60);

part = createShape();

part.beginShape(QUAD);

part.noStroke();

part.texture(sprite);

part.normal(0, 0, 1);

part.vertex(-partSize/2, -partSize/2, 0, 0);

part.vertex(+partSize/2, -partSize/2, sprite.width, 0);

part.vertex(+partSize/2, +partSize/2, sprite.width, sprite.height);

part.vertex(-partSize/2, +partSize/2, 0, sprite.height);

part.endShape();

rebirth(width/2,height/2);

lifespan = random(255);

}

PShape getShape() {

return part;

}

void rebirth(float x, float y) {

float a = random(TWO_PI);

float speed = random(0.5,4);

velocity = new PVector(cos(a), sin(a));

velocity.mult(speed);

lifespan = 255;

part.resetMatrix();

part.translate(x, y);

}

boolean isDead() {

if (lifespan < 0) {

return true;

} else {

return false;

}

}

public void update() {

lifespan = lifespan - 1;

velocity.add(gravity);

// setTint(color): RGB comes from the pots, alpha from the FSR

part.setTint(color(r, g, b, fsr));

part.translate(velocity.x, velocity.y);

}

}

class ParticleSystem {

ArrayList<Particle> particles;

PShape particleShape;

float red;

float green;

float blue;

ParticleSystem(int n) {

particles = new ArrayList<Particle>();

particleShape = createShape(PShape.GROUP);

for (int i = 0; i < n; i++) {

Particle p = new Particle();

particles.add(p);

particleShape.addChild(p.getShape());

}

}

void update() {

for (Particle p : particles) {

p.update();

}

}

void setEmitter(float x, float y) {

for (Particle p : particles) {

if (p.isDead()) {

p.rebirth(x, y);

}

}

}

void display() {

shape(particleShape);

}

}
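
The `serialEvent` function in the sketch above splits each frame on commas and reads the first four values. A more defensive version of that step might look like the following, written in plain Java so it can be tested outside Processing. `FrameParser` and `parse` are hypothetical names, and both the clamping to 0-255 and the null return for a bad frame are assumptions of this sketch rather than behavior taken from the original code.

```java
// Defensive parser for frames of the form "r,g,b,fsr,." (hypothetical helper).
final class FrameParser {
    /** Returns {r, g, b, fsr} clamped to 0..255, or null if the frame is malformed. */
    static float[] parse(String frame) {
        if (frame == null) {
            return null;
        }
        String body = frame.trim();
        if (body.endsWith(".")) {
            body = body.substring(0, body.length() - 1); // drop the terminator
        }
        // String.split discards trailing empty fields, so the comma that
        // follows the FSR value does not produce a fifth entry.
        String[] fields = body.split(",");
        if (fields.length < 4) {
            return null; // partial frame
        }
        float[] out = new float[4];
        for (int i = 0; i < 4; i++) {
            try {
                float v = Float.parseFloat(fields[i].trim());
                if (Float.isNaN(v)) {
                    return null;
                }
                out[i] = Math.max(0f, Math.min(255f, v));
            } catch (NumberFormatException e) {
                return null; // non-numeric field
            }
        }
        return out;
    }

    public static void main(String[] args) {
        float[] values = parse("255,128,0,64,.");
        System.out.println(java.util.Arrays.toString(values));
    }
}
```

In the Processing sketch, the result could be copied into `r`, `g`, `b` and `fsr` only when it is not null, so a garbled frame leaves the previous colors in place.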

Video of the visualization